Py3k status update #2

This is the second status update about my work on the py3k branch, which I can work on thanks to all of the people who donated to the py3k proposal.

Since my previous status update, things have improved a lot: first of all, I fixed the syntax of many more tests, which were failing on the branch because they used constructs which are no longer valid in Python 3, such as u'' strings, the print statement or the old except Exception, e syntax. I have to say that this work is tedious and not very rewarding, but it has to be done anyway, so that the real failures can stand out.
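As a small illustration of the three constructs named above, here is a sketch of their Python 3 spellings. The function describe and the sample values are made up for this example and are not taken from the PyPy test suite.

```python
# Python 3 spellings of the constructs mentioned above.
# describe() and the sample inputs are hypothetical, chosen only for illustration.

def describe(value):
    try:
        return "ok: " + str(int(value))
    except ValueError as e:           # Python 2 spelling: except ValueError, e
        return "error: " + type(e).__name__

text = 'caf\u00e9'                    # Python 2 needed the u'' prefix for a unicode literal

print(describe("42"))                 # Python 2 spelling: print describe("42")
print(describe("forty-two"))
print(len(text))
```

Each of these changes is trivial on its own, which is why fixing them across a whole test suite is mechanical work.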

Then, I spent most of the remaining time killing features which are present in Python 2 and are gone in Python 3.

Some of them were easy and mechanical: for example, I removed all the function attributes such as func_code and func_closure, which have been renamed to __code__ and __closure__, and then I had to find and fix all the places which still expected the old ones.
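To make the renaming concrete, here is a minimal sketch of how the new attribute names look from user code. The functions make_counter and counter are hypothetical and exist only for this example.

```python
# The renamed function attributes, seen from Python 3 code.
# make_counter/counter are hypothetical names used only for illustration.

def make_counter(start):
    def counter(step):
        return start + step
    return counter

counter = make_counter(10)

code = counter.__code__                        # Python 2: counter.func_code
cell = counter.__closure__[0]                  # Python 2: counter.func_closure[0]

print(code.co_name)
print(code.co_freevars)
print(cell.cell_contents)
print(hasattr(counter, "func_code"))           # the old name is gone
```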

Some were trickier: I removed support for the cmp function and the __cmp__ special method, but this also meant that I had to fix a few types which relied on it to be comparable (for example, did you know that the cells contained in __closure__ are comparable?). At the same time, I also removed the old behavior which in Python 2 allows us to compare arbitrary objects with <, > & co.: in Python 3 the only comparisons allowed between incompatible types are == and !=.
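The following sketch shows what this means for user code: a class that would have relied on __cmp__ in Python 2 now defines rich comparison methods, and ordering against an unrelated type raises TypeError while == still works. The Version class and the helper ordering_allowed are assumptions made up for this example.

```python
import functools

# Hypothetical class: in Python 2 it could have defined __cmp__ alone.
@functools.total_ordering
class Version:
    def __init__(self, major, minor):
        self.key = (major, minor)

    def __eq__(self, other):
        if not isinstance(other, Version):
            return NotImplemented
        return self.key == other.key

    def __lt__(self, other):
        if not isinstance(other, Version):
            return NotImplemented
        return self.key < other.key


def ordering_allowed(a, b):
    """Return True if a < b can be evaluated at all (hypothetical helper)."""
    try:
        a < b
    except TypeError:
        return False
    return True


print(Version(3, 2) > Version(2, 7))             # ordering between Versions works
print(Version(3, 2) == "3.2")                    # == between unrelated types is allowed
print(ordering_allowed(Version(3, 2), "3.2"))    # < between unrelated types is not
print(ordering_allowed(1, "one"))
```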

Speaking of old special methods, __hex__ and __oct__ are gone as well (and I didn't even know about their existence before removing them :-))
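In Python 3 the built-ins hex() and oct() rely on __index__ instead of the removed special methods. A minimal sketch, using a made-up Register class:

```python
# hex() and oct() call __index__ in Python 3; __hex__ and __oct__ are never consulted.
# Register is a hypothetical class used only for illustration.

class Register:
    def __init__(self, value):
        self.value = value

    def __index__(self):
        return self.value


r = Register(255)
print(hex(r), oct(r), bin(r))
print([10, 20, 30][Register(1)])    # __index__ also makes the object usable as a list index
```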

But the most important breakthrough was the removal of the _file module, containing the implementation of the file type in Python 2, which is now gone since in Python 3 files are handled by the _io module. Killing the module was not straightforward, because some of the importing logic was tightly tied to the internal implementation of files, so it needed some refactoring. Finally, I had to fix the marshal module to correctly detect text files vs. byte files.

Along with these things, I fixed tons of smaller issues here and there. As a result, there are many fewer failing tests than a few weeks ago. Obviously the number itself does not mean much, because sometimes fixing a single test takes hours, and at other times changing one line fixes tens of tests. But in the end, seeing it drop from 999 to 650 is always nice and rewarding :-).

The road to a pypy3k is still long, but everything is going fine so far. Stay tuned for more updates!

cheers, Antonio

Py3k status update

First of all, many thanks to Amaury Forgeot d'Arc, who started the py3k branch months ago, and already implemented lots of features including e.g. switching to "unicode everywhere" and the int/long unification, making my job considerably easier :-)

I started to work on the branch at the last Leysin sprint together with Romain Guillebert, where we worked on various syntactical changes such as extended tuple unpacking and keyword-only arguments. Working on such features is a good way to learn about a lot of the layers which the PyPy Python interpreter is composed of, because often you have to touch the tokenizer, the parser, the ast builder, the compiler and finally the interpreter.
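For readers who have not met these two syntactical changes yet, here is a short sketch of what they look like. The function connect and its parameters are invented for this example.

```python
# Extended tuple unpacking: the starred name collects the remaining items.
first, *middle, last = [1, 2, 3, 4, 5]
print(first, middle, last)

# Keyword-only arguments: everything after the bare * must be passed by name.
# connect() and its parameters are hypothetical.
def connect(host, *, timeout=10, retries=3):
    return (host, timeout, retries)

print(connect("example.org", timeout=5))

try:
    connect("example.org", 5)        # passing timeout positionally is rejected
except TypeError as e:
    print("TypeError:", e)
```

Both features need support in the parser, the AST builder and the compiler, which is why they touch so many layers of the interpreter.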

Then I worked on improving our test machinery in various ways, e.g. by optimizing the initialization phase of the object space created by tests, which considerably speeds up small test runs, and adding the possibility to automatically run our tests against CPython 3, to ensure that we are not trying to fix a test which is meant to fail :-). I also set up our buildbot to run the py3k tests nightly, so that we can have an up-to-date overview of what is left to do.

Finally I started to look at all the tests in the interpreter/ directory, trying to unmangle the mess of failing tests. Lots of tests were failing because of simple syntax errors (e.g., by using the no longer valid except Exception, e syntax or the old print statement), others for slightly more complex reasons like unicode vs bytes or the now gone int/long distinction. Others were failing simply because they relied on new features, such as the new lexical exception handlers.

To give some numbers, at some point in January we had 1621 failing tests in the branch, while today we are under 1000 (to be exact: 999, and this is why I've waited until today to post the status update :-)).

Before ending this blog post, I would like to thank once again all the people who donated to PyPy, who let me do this wonderful job. That's all for now, I'll post more updates soon.

cheers, Antonio

Will the py3 branch finally be merged into the main branch, so that pypy will be able to run both 2.x and 3.x depending on a boot switch? Or will 2.x support be dropped, or will there be no merge?

@Piotr: the work in the py3k branch is destroying some of the python2 semantics, so we won't merge the two as long as we support python2 (and we'll do it for a long time, because pypy itself is written in python2).

The current plan is just to keep the development in parallel, and regularly merge "default" into "py3k".

Ciao Antonio,

very good news, I'm glad that my little monetary contribution allowed you to be paid to work on that. Keep us posted!

Cheers,

Alberto

@Alberto: thank you very much :-)

@Seb: not in the short/middle term (and it's unclear whether we want to go there at all)

Good luck preventing the python2 and python3 branches from converging; it's what merges do, and the alternative is a lot of cherry-picking. A lot of codebases use feature-flipping instead.

@Echo: no, the plan is to regularly merge "default" (i.e. python2) into "py3k". Note that in "default" we are mostly developing other parts than the Python interpreter (e.g., the JIT compiler), so this should not be too much of a problem, although it will be annoying sometimes

A Larger Example for the Flow Graph Language

Part 4 of Comparing Partial Evaluation to Tracing

This is the fourth and final blog post in a series about comparing partial evaluation and tracing. We've come a long way: In the first post of the series I showed an interpreter for a small flow-graph language together with a partial evaluator for it. In the second post I showed how a tracer for the same language works and how it relates to both execution and to partial evaluation. The third post described an optimizer for traces.

In this final post we can compare and contrast the two different approaches of tracing and partial evaluation by means of an example. The programs in the flow chart language seen so far have been rather small, so I want to give an example of a larger program: an interpreter for an extremely simple bytecode instruction set. I will look at how the partial evaluator deals with that interpreter, and what the tracer does with it. The code for that, as well as all the code of the series can be found here: https://paste.pocoo.org/show/550282/ (some small additions have been made, such as a nicer way to print traces).

A Bytecode Interpreter

Writing programs in the flow graph language is painful, but I still want to give an example that is a bit more interesting than the tiny ones that we've seen so far. The example is an interpreter for the bytecode of a very trivial register-based language. The language has four registers, one of which is an accumulator on which all the actual operations are performed.

The opcodes of the language are:

- jump_if_a, jumps to a target address when the accumulator is non-zero

- mov_a_r0, mov_a_r1, mov_a_r2 move the value of the accumulator to the respective register

- mov_r0_a, mov_r1_a, mov_r2_a move the value of a register to the accumulator

- add_r0_to_a, add_r1_to_a, add_r2_to_a add the value of the register to the accumulator

- decr_a decrement the accumulator

- return_a stop the program and print the accumulator

The interpreter has a main loop that reads the opcode at the current program counter, does a (lengthy) dispatch to the right bytecode via a series of if statements and then executes the right opcode. Afterwards the next opcode is treated equivalently.

Here is a part of the source code in the flow graph language. As pseudocode:

bytecode_loop:

opcode = bytecode[pc]

pc = pc + 1

c = opcode == 'jump_if_a'

if c goto op_jump_if_a else goto not_jump_if_a

# select the right bytecode via a long series of if statements

not_jump_if_a:

c = opcode == 'mov_a_r0'

if c goto op_mov_a_r0 else goto not_mov_a_r0

not_mov_a_r0:

c = opcode == 'mov_a_r1'

if c goto op_mov_a_r1 else goto not_mov_a_r1

...

# bytecode implementations

op_mov_a_r0:

r0 = a

goto bytecode_loop

op_jump_if_a:

c = a == 0

target = bytecode[pc]

pc += 1

if c goto bytecode_loop else goto op_jump_if_a_jump

op_jump_if_a_jump:

pc = target

goto bytecode_loop

...

And actually working, as Prolog facts (the full implementation can be found at the link above):

% bytecode dispatch loop

block(bytecode_loop,

op2(opcode, readlist, var(bytecode), var(pc),

op2(pc, add, var(pc), const(1),

op2(c, eq, var(opcode), const(jump_if_a),

if(c, op_jump_if_a, not_jump_if_a))))).

% select the right bytecode via a long series of if statements

block(not_jump_if_a,

op2(c, eq, var(opcode), const(mov_a_r0),

if(c, op_mov_a_r0, not_mov_a_r0))).

block(not_mov_a_r0,

op2(c, eq, var(opcode), const(mov_a_r1),

if(c, op_mov_a_r1, not_mov_a_r1))).

...

% bytecode implementations

block(op_jump_if_a,

op2(c, eq, var(a), const(0),

op2(target, readlist, var(bytecode), var(pc),

op2(pc, add, var(pc), const(1),

if(c, bytecode_loop, op_jump_if_a_jump))))).

block(op_jump_if_a_jump,

op1(pc, same, var(target),

promote(bytecode, bytecode_loop))).

block(op_mov_a_r0,

op1(r0, same, var(a), jump(bytecode_loop))).

...

The bytecode_loop block is the main dispatch loop. It reads an opcode out of the bytecode list at the program counter position, then has a long series of if statements that compares the current opcode to the various existing opcodes. The full code of the interpreter can be found under the link above.

The bytecodes of the interpreter don't really permit hugely complex programs, but they can be used to write a program that computes the square of a number:

mov_a_r0 # r0 = a

mov_a_r1 # r1 = a

# 2:

mov_r0_a # r0--

decr_a

mov_a_r0

mov_r2_a # r2 += r1

add_r1_to_a

mov_a_r2

mov_r0_a # if r0!=0: goto 2

jump_if_a 2

mov_r2_a # return r2

return_a
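To make the semantics of the opcodes and of the squaring program easier to follow, here is a sketch of the same interpreter in plain Python rather than in the flow graph language. It is only an illustration under a few assumptions: the names SQUARE and interpret are made up, the three registers are kept in a list, and return_a returns the accumulator instead of printing it.

```python
# Hypothetical Python rendering of the bytecode interpreter described above.
# The bytecode is a flat list; the jump target follows the jump_if_a opcode.
SQUARE = [
    "mov_a_r0", "mov_a_r1",                   # r0 = a; r1 = a
    "mov_r0_a", "decr_a", "mov_a_r0",         # position 2: r0--
    "mov_r2_a", "add_r1_to_a", "mov_a_r2",    # r2 += r1
    "mov_r0_a", "jump_if_a", 2,               # if r0 != 0: goto 2
    "mov_r2_a", "return_a",                   # return r2
]


def interpret(bytecode, a):
    regs = [0, 0, 0]
    pc = 0
    while True:
        opcode = bytecode[pc]
        pc += 1
        if opcode == "jump_if_a":
            target = bytecode[pc]
            pc += 1
            if a != 0:
                pc = target
        elif opcode in ("mov_a_r0", "mov_a_r1", "mov_a_r2"):
            regs[int(opcode[-1])] = a         # accumulator -> register
        elif opcode in ("mov_r0_a", "mov_r1_a", "mov_r2_a"):
            a = regs[int(opcode[5])]          # register -> accumulator
        elif opcode in ("add_r0_to_a", "add_r1_to_a", "add_r2_to_a"):
            a += regs[int(opcode[5])]
        elif opcode == "decr_a":
            a -= 1
        elif opcode == "return_a":
            return a                          # the original prints and stops
        else:
            raise ValueError("unknown opcode: %r" % (opcode,))


print(interpret(SQUARE, 16))
```

Note that the program decrements before it tests, so it only terminates for positive inputs.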

Partially Evaluating the Bytecode Interpreter

The partial evaluator from the first blog post can be easily used to partially evaluate the bytecode interpreter. The static inputs are the bytecode for computing the square, as given above, and the initial program counter value. The dynamic input is the content of the accumulator (the number to be squared). This can be done as follows:

?- bytecode_square(B),

Env = [bytecode/B, pc/0],

do_pe(bytecode_loop, Env, Label),

REnv = [a/16, r0/0, r1/0, r2/0],

interp(jump(Label), REnv), listing(block).

256

:- dynamic block/2.

<lots of generated code>

The code that is generated by the partial evaluation process is somewhat hard to read. It contains a lot of passages like this:

...

block(op_return_a1, print_and_stop(var(a))).

block(not_decr_a1, jump(op_return_a1)).

block(not_add_r2_to_a2, jump(not_decr_a1)).

block(not_add_r1_to_a2, jump(not_add_r2_to_a2)).

block(not_add_r0_to_a3, jump(not_add_r1_to_a2)).

block(not_mov_r2_a3, jump(not_add_r0_to_a3)).

block(not_mov_r1_a5, jump(not_mov_r2_a3)).

block(not_mov_r0_a5, jump(not_mov_r1_a5)).

block(not_mov_a_r27, jump(not_mov_r0_a5)).

block(not_mov_a_r18, jump(not_mov_a_r27)).

block(not_mov_a_r09, jump(not_mov_a_r18)).

block(not_jump_if_a11, jump(not_mov_a_r09)).

block(bytecode_loop12, jump(not_jump_if_a11)).

block(op_mov_r2_a2, op1(a, same, var(r2), jump(bytecode_loop12))).

...

I.e. lots of blocks that do nothing but jump to another block, interspersed with some blocks that contain an actual operation. I cleaned the output up manually and got something like the following (this sort of cleanup is something a good partial evaluation system would do itself, after partial evaluation has occurred):

block(bytecode_loop1,

op1(r0, same, var(a),

op1(r1, same, var(a),

op1(a, same, var(r0),

op2(a, sub, var(a), const(1),

op1(r0, same, var(a),

op1(a, same, var(r2),

op2(a, add, var(a), var(r1),

op1(r2, same, var(a),

op1(a, same, var(r0),

op2(c, eq, var(a), const(0),

if(c, bytecode_loop11, op_jump_if_a_jump1)))))))))))).

block(bytecode_loop11,

op1(a, same, var(r2),

print_and_stop(var(a)))).

block(op_jump_if_a_jump1,

op1(a, same, var(r0),

op2(a, sub, var(a), const(1),

op1(r0, same, var(a),

op1(a, same, var(r2),

op2(a, add, var(a), var(r1),

op1(r2, same, var(a),

op1(a, same, var(r0),

op2(c, eq, var(a), const(0),

if(c, bytecode_loop11, op_jump_if_a_jump1)))))))))).

What do we see here? The partial evaluator has generated a block bytecode_loop1, which corresponds to the initialization opcodes mov_a_r0 and mov_a_r1 together with one iteration of the loop. Then it either jumps to a copy of the main loop (label op_jump_if_a_jump1) or to block bytecode_loop11, which prints the result and then stops. The residual code does exactly what the bytecode did: it squares the accumulator and then prints it. All the uses of the bytecode and pc variables are gone.

Why did the partial evaluator produce two copies of the main loop that look the same? The reason for that is that in the second copy, the additional static information target = 2 is known, where target is a variable in the interpreter source that stores the jump target, for very brief periods of time. This additional static information does not have any effect on the residual code, so the same code is uselessly generated twice. This is an example of overspecialization.

Tracing the Interpreter

In this section we will look at what happens if we try to trace the interpreter. The naive way of doing that yields traces that are not very useful, because they abort after one iteration. We will look at a way of avoiding this problem. The problems described in this section are at the core of the paper Tracing the meta-level: PyPy's tracing JIT compiler (that paper uses a slightly more advanced version of the bytecode interpreter as an example).

To trace the interpreter, it is useful to change the bytecode_loop block from above to always promote the bytecode and the pc variables, because without knowing them the trace produced is not really interesting. This is similar to making these variables static in the partial evaluation example above:

block(bytecode_loop,

promote(bytecode, bytecode_loop_promote_bytecode)).

block(bytecode_loop_promote_bytecode,

promote(pc, bytecode_loop_promote_pc)).

block(bytecode_loop_promote_pc,

op2(opcode, readlist, var(bytecode), var(pc),

op2(pc, add, var(pc), const(1),

op2(c, eq, var(opcode), const(jump_if_a),

if(c, op_jump_if_a, not_jump_if_a))))).

...

The rest of the interpreter stays unchanged.

To trace the interpreter we would start naively at the bytecode_loop label, because that's the label in the interpreter that is jumped to most often (which a profiler could establish easily). The following command can be used for that (this output prints traces in a slightly more readable way than in previous blog posts):

?- bytecode_square(B),

A = 16, Env = [bytecode/B, pc/2, a/A, r0/A, r1/A, r2/0],

do_trace(bytecode_loop, Env).

trace

guard_value(bytecode,[mov_a_r0,mov_a_r1,mov_r0_a,decr_a,mov_a_r0,mov_r2_a,add_r1_to_a,mov_a_r2,mov_r0_a,jump_if_a,2,mov_r2_a,return_a],[],bytecode_loop_promote_bytecode)

guard_value(pc,2,[],bytecode_loop_promote_pc)

op2(opcode,readlist,var(bytecode),var(pc))

op2(pc,add,var(pc),const(1))

op2(c,eq,var(opcode),const(jump_if_a))

guard_false(c,[],op_jump_if_a)

op2(c,eq,var(opcode),const(mov_a_r0))

guard_false(c,[],op_mov_a_r0)

op2(c,eq,var(opcode),const(mov_a_r1))

guard_false(c,[],op_mov_a_r1)

op2(c,eq,var(opcode),const(mov_a_r2))

guard_false(c,[],op_mov_a_r2)

op2(c,eq,var(opcode),const(mov_r0_a))

guard_true(c,[],not_mov_r0_a)

op1(a,same,var(r0))

loop

opttrace

guard_value(bytecode,[mov_a_r0,mov_a_r1,mov_r0_a,decr_a,mov_a_r0,mov_r2_a,add_r1_to_a,mov_a_r2,mov_r0_a,jump_if_a,2,mov_r2_a,return_a],[],bytecode_loop_promote_bytecode)

guard_value(pc,2,[bytecode/[mov_a_r0,mov_a_r1,mov_r0_a,decr_a,mov_a_r0,mov_r2_a,add_r1_to_a,mov_a_r2,mov_r0_a,jump_if_a,2,mov_r2_a,return_a]],bytecode_loop_promote_pc)

op1(a,same,var(r0))

op1(bytecode,same,const([mov_a_r0,mov_a_r1,mov_r0_a,decr_a,mov_a_r0,mov_r2_a,add_r1_to_a,mov_a_r2,mov_r0_a,jump_if_a,2,mov_r2_a,return_a]))

op1(pc,same,const(3))

op1(opcode,same,const(mov_r0_a))

op1(c,same,const(1))

loop

256

B = [mov_a_r0, mov_a_r1, mov_r0_a, decr_a, mov_a_r0, mov_r2_a, add_r1_to_a, mov_a_r2, mov_r0_a|...],

A = 16,

Env = [bytecode/[mov_a_r0, mov_a_r1, mov_r0_a, decr_a, mov_a_r0, mov_r2_a, add_r1_to_a|...], pc/2, a/16, r0/16, r1/16, r2/0]

These traces are very short. They start with promoting the bytecode and the pc, followed by the execution of the opcode mov_r0_a, which is the one at position 2 in the given bytecode. Then they increment the pc and loop back to the beginning. Looking at the optimized trace, it is clear that the trace is essentially useless. It will run only for one iteration, because in the second iteration the pc is 3, thus the guard_value at the beginning will fail.

This problem can be solved by tracing more than just one iteration of the bytecode dispatch loop, which is called meta-tracing. To get this behaviour, in this simple example it is enough to start (and thus end) tracing at a different label, op_jump_if_a_jump. This label is hit when the interpreter executes a jump_if_a bytecode and the jump is taken. In a loop on the level of the executed bytecode program there is one such jump. Thus tracing from this label, a full loop in the bytecode program is traced, containing potentially many iterations of the bytecode dispatch loop in the control flow graph language.

Doing that yields the following:

?- bytecode_square(B),

A = 16, Env = [bytecode/B, pc/11, a/A, r0/A, r1/A, r2/0, target/2],

do_trace(op_jump_if_a_jump, Env).

trace

op1(pc,same,var(target))

guard_value(bytecode,[mov_a_r0,mov_a_r1,mov_r0_a,decr_a,mov_a_r0,mov_r2_a,add_r1_to_a,mov_a_r2,mov_r0_a,jump_if_a,2,mov_r2_a,return_a],[],bytecode_loop)

guard_value(bytecode,[mov_a_r0,mov_a_r1,mov_r0_a,decr_a,mov_a_r0,mov_r2_a,add_r1_to_a,mov_a_r2,mov_r0_a,jump_if_a,2,mov_r2_a,return_a],[],bytecode_loop_promote_bytecode)

guard_value(pc,2,[],bytecode_loop_promote_pc)

op2(opcode,readlist,var(bytecode),var(pc))

op2(pc,add,var(pc),const(1))

op2(c,eq,var(opcode),const(jump_if_a))

guard_false(c,[],op_jump_if_a)

op2(c,eq,var(opcode),const(mov_a_r0))

guard_false(c,[],op_mov_a_r0)

op2(c,eq,var(opcode),const(mov_a_r1))

guard_false(c,[],op_mov_a_r1)

op2(c,eq,var(opcode),const(mov_a_r2))

guard_false(c,[],op_mov_a_r2)

op2(c,eq,var(opcode),const(mov_r0_a))

guard_true(c,[],not_mov_r0_a)

op1(a,same,var(r0))

guard_value(bytecode,[mov_a_r0,mov_a_r1,mov_r0_a,decr_a,mov_a_r0,mov_r2_a,add_r1_to_a,mov_a_r2,mov_r0_a,jump_if_a,2,mov_r2_a,return_a],[],bytecode_loop_promote_bytecode)

guard_value(pc,3,[],bytecode_loop_promote_pc)

op2(opcode,readlist,var(bytecode),var(pc))

...

lots of operations omitted

...

guard_value(bytecode,[mov_a_r0,mov_a_r1,mov_r0_a,decr_a,mov_a_r0,mov_r2_a,add_r1_to_a,mov_a_r2,mov_r0_a,jump_if_a,2,mov_r2_a,return_a],[],bytecode_loop_promote_bytecode)

guard_value(pc,9,[],bytecode_loop_promote_pc)

op2(opcode,readlist,var(bytecode),var(pc))

op2(pc,add,var(pc),const(1))

op2(c,eq,var(opcode),const(jump_if_a))

guard_true(c,[],not_jump_if_a)

op2(c,eq,var(a),const(0))

op2(target,readlist,var(bytecode),var(pc))

op2(pc,add,var(pc),const(1))

guard_false(c,[],bytecode_loop)

loop

opttrace

op1(pc,same,var(target))

guard_value(bytecode,[mov_a_r0,mov_a_r1,mov_r0_a,decr_a,mov_a_r0,mov_r2_a,add_r1_to_a,mov_a_r2,mov_r0_a,jump_if_a,2,mov_r2_a,return_a],[],bytecode_loop)

guard_value(pc,2,[bytecode/[mov_a_r0,mov_a_r1,mov_r0_a,decr_a,mov_a_r0,mov_r2_a,add_r1_to_a,mov_a_r2,mov_r0_a,jump_if_a,2,mov_r2_a,return_a]],bytecode_loop_promote_pc)

op1(a,same,var(r0))

op2(a,sub,var(a),const(1))

op1(r0,same,var(a))

op1(a,same,var(r2))

op2(a,add,var(a),var(r1))

op1(r2,same,var(a))

op1(a,same,var(r0))

op2(c,eq,var(a),const(0))

guard_false(c,[bytecode/[mov_a_r0,mov_a_r1,mov_r0_a,decr_a,mov_a_r0,mov_r2_a,add_r1_to_a,mov_a_r2,mov_r0_a,jump_if_a,2,mov_r2_a,return_a],pc/11,opcode/jump_if_a,target/2],bytecode_loop)

op1(bytecode,same,const([mov_a_r0,mov_a_r1,mov_r0_a,decr_a,mov_a_r0,mov_r2_a,add_r1_to_a,mov_a_r2,mov_r0_a,jump_if_a,2,mov_r2_a,return_a]))

op1(pc,same,const(11))

op1(opcode,same,const(jump_if_a))

op1(target,same,const(2))

op1(c,same,const(0))

loop

256

B = [mov_a_r0, mov_a_r1, mov_r0_a, decr_a, mov_a_r0, mov_r2_a, add_r1_to_a, mov_a_r2, mov_r0_a|...],

A = 16,

Env = [bytecode/[mov_a_r0, mov_a_r1, mov_r0_a, decr_a, mov_a_r0, mov_r2_a, add_r1_to_a|...], pc/11, a/16, r0/16, r1/16, r2/0, target/2] .

That looks better. The trace corresponds to the interpreter running all the bytecodes in the loop of the squaring function in the example bytecode above. The optimized code starts with two guards (checking that the bytecode is still the one for the squaring function, checking that the pc is 2) and then only does the operations that actually do the computation. No bytecode dispatching is performed, thus the interpretation overhead is fully removed, apart from the two guard_value operations at the beginning.

Many of the assignments in the trace are superfluous, e.g. all the copying back and forth between registers r0, r1, r2 and accumulator a. This could be easily solved by an even more intelligent optimization utilizing SSA form.

Conclusion About the Interpreter

Both partial evaluation and meta-tracing can be used to transform the example bytecode computing a square into a form that shows the essential computation that is going on, without the interpretation overhead. The naive partial evaluator produces lots of extra blocks that just jump around, which could be solved with a post-processing step. The tracer by itself produces uselessly short traces, but with a simple trick of starting the trace at a different point the results become a lot better.

In a real meta-tracing system, the meta-tracer would need a way for the author of the interpreter to mark which bytecode corresponds to a backward jump. It would also need better integration with the interpreter to start tracing automatically, as well as cache the traces. Additionally, it would have to deal better with guards that fail a lot, attaching new traces to the failing guards. However, all that is "just" engineering on top of the ideas presented in this series of blog posts.

High-Level Conclusion

Some concluding high-level thoughts about the similarities of tracing and partial evaluation: Tracing and partial evaluation try to tackle a similar problem, that of automatically reducing the interpreter overhead. Their approaches are slightly different, though.

Tracing is very close to normal evaluation, only keeping some extra information in the process. But then, the optimizer that is used in a tracer is again very similar in structure to a partial evaluator. The task of the optimizer is much simpler though, because it does not need to deal with control flow at all, just a linear list of operations.

So in a sense tracing is taking those parts of partial evaluation that work (the "just evaluate those things that you can, and leave the others") and replacing the parts that don't (controlling unfolding) by a much more pragmatic mechanism. That mechanism observes actual execution runs of the program to choose control flow paths that are typical. At the same time, the tracer's focus is on loops, because they are where most programs spend significant amounts of time.

Another point of view of tracing is that it is a form of partial evaluation that replaces the control components of a partial evaluator with an oracle (the actual execution runs) that provides the information about which paths to look at.

Already in the quite trivial interpreter here the effects of this are visible. The simple partial evaluator over-specializes the loop and produces two identical versions of it. The tracer doesn't, and it also generates only code for the loop itself, not for the initialization opcodes.

That's it for this series. To those that made it, thanks for following along. Also thanks to Samuele and Sven, who consistently gave me good feedback on the posts before I put them here.

PyPy 1.8 - business as usual

We're pleased to announce the 1.8 release of PyPy. As usual, this release brings a lot of bugfixes, together with performance and memory improvements over the 1.7 release. The main highlight of the release is the introduction of list strategies, which make homogeneous lists more efficient both in terms of performance and memory. This release also upgrades us from Python 2.7.1 compatibility to 2.7.2. Otherwise it's "business as usual" in the sense that performance improved roughly 10% on average since the previous release.

You can download the PyPy 1.8 release here:

https://pypy.org/download.html

What is PyPy?

PyPy is a very compliant Python interpreter, almost a drop-in replacement for CPython 2.7. It's fast (pypy 1.8 and cpython 2.7.1 performance comparison) due to its integrated tracing JIT compiler.

This release supports x86 machines running Linux 32/64, Mac OS X 32/64 or Windows 32. Windows 64 work has stalled; we would welcome a volunteer to handle that.

Highlights

- List strategies. Now lists that contain only ints or only floats should be as efficient as storing them in a binary-packed array. It also improves the JIT performance in places that use such lists. There are also special strategies for unicode and string lists.

- As usual, numerous performance improvements. There are many examples of python constructs that now should be faster; too many to list them.

- Bugfixes and compatibility fixes with CPython.

- Windows fixes.

- NumPy effort progress; for the exact list of things that have been done, consult the numpy status page. A tentative list of things that have been done:

  - multi-dimensional arrays

  - various sizes of dtypes

  - a lot of ufuncs

  - a lot of other minor changes

  Right now the numpy module is available under both numpy and numpypy names. However, because it's incomplete, you have to import numpypy first before doing any imports from numpy.

- New JIT hooks that allow you to hook into the JIT process from your python program. There is a brief overview of what they offer.

- Standard library upgrade from 2.7.1 to 2.7.2.
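The idea behind the list strategies highlight can be pictured with a small Python sketch. This is not how PyPy implements it internally; the class StrategyList and its methods are invented here purely to illustrate the principle: keep a compact, typed storage while the list is homogeneous, and switch once to a generic storage when an item of another type arrives.

```python
from array import array


class StrategyList:
    """Hypothetical illustration of a list that switches storage strategy."""

    def __init__(self):
        self._ints = array("q")      # packed 64-bit integers while all items are ints
        self._objects = None         # generic storage, used after the first non-int

    def append(self, item):
        if self._objects is None:
            if type(item) is int and -2**63 <= item < 2**63:
                self._ints.append(item)
                return
            # First item that does not fit: convert once to the generic strategy.
            self._objects = list(self._ints)
            self._ints = None
        self._objects.append(item)

    def strategy(self):
        return "int" if self._objects is None else "object"

    def __len__(self):
        return len(self._ints if self._objects is None else self._objects)

    def __getitem__(self, index):
        storage = self._ints if self._objects is None else self._objects
        return storage[index]


lst = StrategyList()
for i in (1, 2, 3):
    lst.append(i)
print(lst.strategy())
lst.append("x")
print(lst.strategy())
```

From the outside the list behaves the same under either strategy; only the memory layout differs.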

Ongoing work

As usual, there is quite a bit of ongoing work that either didn't make it to the release or is not ready yet. Highlights include:

- Non-x86 backends for the JIT: ARMv7 (almost ready) and PPC64 (in progress)

- Specialized type instances - allocate instances as efficiently as C structs, including type specialization

- More numpy work

- Since the last release there was a significant breakthrough in PyPy's fundraising. We now have enough funds to work on the first stages of numpypy and py3k. We would like to thank again everyone who donated.

- It's also probably worth noting that we're considering donations for the Software Transactional Memory project. You can read more about our plans.

Cheers,

The PyPy Team

As usual, excellent work!

The faster Pypy becomes, the less the need to use limited languages just for speed considerations.

List specialization is really cool and seems to boost performance and reduce memory usage considerably. I'd love seeing specializations for tuples of ints/floats/strings as structs.

On a side note, what stops people from using RPython as a compiled language (in terms of speed) with a nicer syntax?

Well done!

I find nothing on the comparison page about memory (maybe because it's called speed.pypy.org...). How are you stacking up against CPython there, on benchmarks and real world examples? I realize a JIT will always need some memory overhead, but perhaps you have done enough clever things now, like list strategies, to be competitive anyway?

I would donate to this.

Would this give us 'true' multithreading? a la clojure?

Seems like you guys are ahead of the curve with STM: https://arstechnica.com/business/news/2012/02/transactional-memory-going-mainstream-with-intel-haswell.ars

Did anybody test if it works with pypy?

https://github.com/mvantellingen/psycopg2-ctypes

would be great to have out of the box postgresql support for Django

Just donated for py3k. I think it would make sense to allow donations for STM as well.

Introductory Article About RPython

"RPython, to my mind, is an astonishing project. It has, almost single-handedly, opened up an entirely new approach to VM implementation. As my experience shows, creating a decent RPython VM is not a huge amount of work (despite some frustrations). In short: never again do new languages need come with unusably slow VMs. That the the PyPy / RPython team have shown that these ideas scale up to a fast implementation of a large, real-world language (Python) is another feather in their cap."

My English is not very good, but I suspect "Introductionary" is not a word. I would use "introductory" instead.

It probably wasn't before, but now it is! (and a pretty nice word, no?)

Fixed.

Optimizing Traces of the Flow Graph Language

Part 3 of Comparing Partial Evaluation to Tracing

This is the third blog post in a series about comparing partial evaluation and tracing. In the first post of the series I introduced a small flow-graph language together with an interpreter for it. Then I showed a partial evaluator for the language. In the second post of the series I showed how a tracer for the same language works and how it relates to both execution and to partial evaluation. Then I added support for promotion to that tracer.

In this post I will show how to optimize the traces that are produced by the tracer and compare the structure of the optimizer to that of partial evaluation.

The code from this post can be found here: https://paste.pocoo.org/show/547304/

Optimizing Traces

In the last post we saw how to produce a linear trace with guards by interpreting a control flow graph program in a special mode. A trace always ends with a loop statement, which jumps to the beginning. The tracer is just logging the operations that are done while interpreting, so the trace can contain superfluous operations. On the other hand, the trace also contains some of the runtime values through promotions and some decisions made on them which can be exploited by optimization. An example for this is the trace produced by the promotion example from the last post:

op2(c,ge,var(i),const(0),

guard_true(c,[],l_done,

guard_value(x,5,[],b2,

op2(x2,mul,var(x),const(2),

op2(x3,add,var(x2),const(1),

op2(i,sub,var(i),var(x3),

loop))))))

After the guard_value(x, 5, ...) operation, x is known to be 5: If it isn't 5, execution falls back to the interpreter. Therefore, operations on x after the guard can be constant-folded. To do that sort of constant-folding, an extra optimization step is needed. That optimization step walks along the trace, remembers which variables are constants and what their values are using a partial environment. The optimizer removes operations that have only constant arguments and leaves the others in the trace. This process is actually remarkably similar to partial evaluation: Some variables are known to be constants, operations on only constant arguments are optimized away, the rest remains.

The code for optimizing operations looks as follows:

optimize(op1(ResultVar, Op, Arg, Rest), PEnv, NewOp) :-

presolve(Arg, PEnv, RArg),

(RArg = const(C) ->

do_op(Op, C, Res),

write_env(PEnv, ResultVar, Res, NEnv),

NewOp = RestResidual

;

remove_env(PEnv, ResultVar, NEnv),

NewOp = op1(ResultVar, Op, RArg, RestResidual)

),

optimize(Rest, NEnv, RestResidual).

optimize(op2(ResultVar, Op, Arg1, Arg2, Rest), PEnv, NewOp) :-

presolve(Arg1, PEnv, RArg1),

presolve(Arg2, PEnv, RArg2),

(RArg1 = const(C1), RArg2 = const(C2) ->

do_op(Op, C1, C2, Res),

write_env(PEnv, ResultVar, Res, NEnv),

NewOp = RestResidual

;

remove_env(PEnv, ResultVar, NEnv),

NewOp = op2(ResultVar, Op, RArg1, RArg2, RestResidual)

),

optimize(Rest, NEnv, RestResidual).

Just like partial evaluation! It even reuses the helper predicate presolve from the partial evaluator and a partial environment PEnv. When the arguments of the operation are known constants in the partial environment, the operation can be executed at optimization time and removed from the trace. Otherwise, the operation has to stay in the output trace. In that case the result variable (as in the partial evaluator) needs to be removed from the partial environment, because it was just overwritten by an unknown result.
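To make the folding step concrete outside of Prolog, here is a minimal Python sketch of the same idea. It is not the code from the post: the tuple encoding of operations, the dict used as partial environment and the names BINOPS and optimize_ops are assumptions made for illustration, and only op2 is handled. The example starts with x already known to be 5, as if a guard_value had just been processed.

```python
import operator

# Hypothetical Python stand-ins for the Prolog terms: arguments are
# ("var", name) or ("const", value), operations are tuples in a flat list.
BINOPS = {"add": operator.add, "sub": operator.sub, "mul": operator.mul}


def presolve(arg, penv):
    """Replace a variable by a constant if the partial environment knows it."""
    if arg[0] == "var" and arg[1] in penv:
        return ("const", penv[arg[1]])
    return arg


def optimize_ops(trace, penv):
    """Fold op2 operations whose arguments are both known constants."""
    penv = dict(penv)  # do not mutate the caller's environment
    residual = []
    for _, result, op, arg1, arg2 in trace:
        rarg1, rarg2 = presolve(arg1, penv), presolve(arg2, penv)
        if rarg1[0] == "const" and rarg2[0] == "const":
            # Both arguments known: compute now and emit nothing.
            penv[result] = BINOPS[op](rarg1[1], rarg2[1])
        else:
            # The result is unknown from here on; forget any stale constant.
            penv.pop(result, None)
            residual.append(("op2", result, op, rarg1, rarg2))
    return residual, penv


trace = [
    ("op2", "x2", "mul", ("var", "x"), ("const", 2)),
    ("op2", "x3", "add", ("var", "x2"), ("const", 1)),
    ("op2", "i", "sub", ("var", "i"), ("var", "x3")),
]
residual, penv = optimize_ops(trace, {"x": 5})
print(residual)
print(penv)
```

Only the subtraction on i survives, with x3 replaced by a constant. The other two operations live on as entries in the partial environment.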

Now we need to deal with guards in the trace.

optimize(guard_true(V, [], L, Rest), PEnv, NewOp) :-

plookup(V, PEnv, Val),

(Val = const(C) ->

NewOp = RestResidual

;

NewOp = guard_true(V, PEnv, L, RestResidual)

),

optimize(Rest, PEnv, RestResidual).

optimize(guard_false(V, [], L, Rest), PEnv, NewOp) :-

plookup(V, PEnv, Val),

(Val = const(C) ->

NewOp = RestResidual,

NEnv = PEnv

;

write_env(PEnv, V, 0, NEnv),

NewOp = guard_false(V, PEnv, L, RestResidual)

),

optimize(Rest, NEnv, RestResidual).

When the variable that is being guarded is actually known to be a constant, we can remove the guard. Note that it is not possible that the guard of that constant fails: The tracer recorded the operation while running with real values, therefore the guards have to succeed for values the optimizer discovers to be constant.

guard_false is slightly different from guard_true: after the former we know that the argument is actually 0. After guard_true we only know that it is not equal to zero, but not which precise value it has.

Another point to note in the optimization of guards is that the second argument of the guard operation, which was so far always just an empty list, is now replaced by the partial environment PEnv. I will discuss further down why this is needed.

Optimizing guard_value is very similar, except that it really gives precise information about the variable involved:

optimize(guard_value(V, C, [], L, Rest), PEnv, NewOp) :-

plookup(V, PEnv, Val),

(Val = const(C1) ->

NewOp = RestResidual,

NEnv = PEnv

;

write_env(PEnv, V, C, NEnv),

NewOp = guard_value(V, C, PEnv, L, RestResidual)

),

optimize(Rest, NEnv, RestResidual).

This operation is the main way the optimizer learns that a variable is constant, which it then exploits to do constant-folding on later operations. This is a chief difference from partial evaluation: there the optimizer knows the value of some variables from the start. When optimizing traces, the value of no variable is known at the beginning. Knowledge about some variables is only gained later, through guards.
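The three guard rules can be summarized in one small Python function. This is a sketch under assumptions, not the post's code: guards are encoded as tuples whose last element is the resume label, the partial environment is a dict, and optimize_guard is a name invented here.

```python
def optimize_guard(guard, penv):
    """Return (residual guard or None, updated partial environment)."""
    kind, var = guard[0], guard[1]
    if var in penv:
        # The guarded variable is a known constant, so the guard is dropped.
        return None, penv
    label = guard[-1]
    new_penv = dict(penv)
    if kind == "guard_value":
        value = guard[2]
        new_penv[var] = value  # precise knowledge: var == value
        return ("guard_value", var, value, dict(penv), label), new_penv
    if kind == "guard_false":
        new_penv[var] = 0  # after guard_false the variable must be 0
    # guard_true only tells us "not zero", which adds no constant.
    return (kind, var, dict(penv), label), new_penv


penv = {}
g1, penv = optimize_guard(("guard_value", "x", 3, [], "b2"), penv)
g2, penv = optimize_guard(("guard_value", "x", 3, [], "b2"), penv)
g3, penv = optimize_guard(("guard_true", "c", [], "l_done"), penv)
print(g1)
print(g2)
print(g3)
```

The first guard_value is kept and teaches the optimizer that x is 3. The second one is redundant and disappears. The guard_true stays and carries the knowledge gathered so far.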

Now we are missing what happens with the loop statement. In principle, it is turned into a loop statement again. However, at the loop statement a few additional operations need to be emitted. The reason is that we optimized away operations and thus assignments when the result value of the variable was a constant. That means the involved variable still potentially has some older value. The next iteration of the loop would continue with this older value, which is obviously wrong. Therefore we need to emit some assignments before the loop statement, one per entry in the partial environment:

optimize(loop, PEnv, T) :-

generate_assignments(PEnv, T).

generate_assignments([], loop).

generate_assignments([Var/Val | Tail], op1(Var, same, const(Val), T)) :-

generate_assignments(Tail, T).

As an example of how generate_assignments works, consider the partial environment [x/5, y/10]. The following assignments are generated:

?- generate_assignments([x/5, y/10], Out).

Out = op1(x, same, const(5), op1(y, same, const(10), loop)).
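For readers less used to Prolog, the same step might look as follows in Python. The flat list of tuples and the dict for the partial environment are assumptions of this sketch; the Prolog version builds a nested term instead.

```python
def generate_assignments(penv):
    """Emit one 'same' assignment per known constant, then the loop."""
    ops = [("op1", var, "same", ("const", val)) for var, val in penv.items()]
    ops.append(("loop",))
    return ops


tail = generate_assignments({"x": 5, "y": 10})
print(tail)
```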

That's all the code of the optimizer. While the basic structure is quite similar to partial evaluation, it's a lot less complex as well. What made the partial evaluator hard was that it needs to deal with control flow statements and with making sure that code is reused if the same block is partially evaluated with the same constants. Here, all these complexities go away. The tracer has already removed all control flow and replaced it with guards and one loop operation at the end. Thus, the optimizer can simply do one pass over the operations, removing some (with some extra care around the loop statement).

With this machinery in place, we can optimize the trace from the promotion example of the last post (here with x promoted to the value 3):

?- optimize(

guard_value(x,3,[],b2,

op2(x2,mul,var(x),const(2),

op2(x3,add,var(x2),const(1),

op2(i,sub,var(i),var(x3),

op2(c,ge,var(i),const(0),

guard_true(c,[],l_done, loop)))))),

[],

LoopOut).

LoopOut = guard_value(x, 3, [], b2, op2(i, sub, var(i), const(7), op2(c, ge, var(i), const(0), guard_true(c, [x/3, x2/6, x3/7], l_done, op1(x, same, const(3), op1(x2, same, const(6), op1(x3, same, const(7), loop)))))))

More readably, the optimized version is:

guard_value(x, 3, [], b2,

op2(i, sub, var(i), const(7),

op2(c, ge, var(i), const(0),

guard_true(c, [x/3, x2/6, x3/7], l_done,

op1(x, same, const(3),

op1(x2, same, const(6),

op1(x3, same, const(7),

loop)))))))

As intended, the operations on x after the guard_value have all been removed. However, some additional assignments (to x, x2, x3) at the end have been generated as well. The assignments look superfluous, but the optimizer does not have enough information to easily recognize this. That can be fixed, but only at the cost of additional complexity. (A real system would transform the trace into static single assignment form to answer such questions.)

Resuming to the Interpreter

Why does the code above need to add the partial environment to the guards that cannot be optimized away? The reason is related to why we needed to generate assignments before the loop statement. The problem is that the optimizer removes assignments to variables when it knows the values of these variables. That means that when switching back from running the optimized trace to the interpreter, a number of variables are not updated in the environment, making the execution in the interpreter incorrect.

In the example above, this applies to the variables x2 and x3. When the second guard fails, they have not been assigned in the optimized case. Therefore, the guard lists them and their (always constant) values.

When switching back, these assignments need to be made. Thus we need to adapt the resume_interp predicate from the last blog post as follows:

write_resumevars([], Env, Env).

write_resumevars([Key / Value | Rest], Env, NEnv) :-

write_env(Env, Key, Value, Env1),

write_resumevars(Rest, Env1, NEnv).

resume_interp(Env, ResumeVars, L) :-

write_resumevars(ResumeVars, Env, NEnv),

block(L, Block),

interp(Block, NEnv).

On resuming, the ResumeVars (a former partial environment) are simply added back to the normal environment before going back to the interpreter.
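The effect of the resume variables can be observed with a small Python model of trace execution. Everything here is a sketch under assumptions: the tuple encoding, the dicts used as environments and the function run_until_guard_fails are invented for illustration. Instead of calling an interpreter, the function returns the label and the environment the interpreter would be resumed with.

```python
import operator

OPS = {"sub": operator.sub, "ge": lambda a, b: int(a >= b)}

# The optimized trace from above, as a flat list of tuples.
OPTIMIZED = [
    ("guard_value", "x", 3, {}, "b2"),
    ("op2", "i", "sub", ("var", "i"), ("const", 7)),
    ("op2", "c", "ge", ("var", "i"), ("const", 0)),
    ("guard_true", "c", {"x": 3, "x2": 6, "x3": 7}, "l_done"),
    ("op1", "x", "same", ("const", 3)),
    ("op1", "x2", "same", ("const", 6)),
    ("op1", "x3", "same", ("const", 7)),
    ("loop",),
]


def resolve(arg, env):
    kind, payload = arg
    return env[payload] if kind == "var" else payload


def run_until_guard_fails(trace, env):
    """Run the trace repeatedly; return (label, env) for the resume point."""
    env = dict(env)
    while True:
        for op in trace:
            if op[0] == "op2":
                _, res, name, a1, a2 = op
                env[res] = OPS[name](resolve(a1, env), resolve(a2, env))
            elif op[0] == "op1":  # only 'same' occurs in this trace
                _, res, _, a = op
                env[res] = resolve(a, env)
            elif op[0] == "guard_value":
                _, var, value, resume_vars, label = op
                if env[var] != value:
                    return label, {**env, **resume_vars}
            elif op[0] == "guard_true":
                _, var, resume_vars, label = op
                if env[var] == 0:
                    return label, {**env, **resume_vars}
            # ("loop",) needs no action: the while loop restarts the trace


label, env = run_until_guard_fails(OPTIMIZED, {"i": 5, "x": 3})
print(label, env)
```

With i starting at 5 the guard_true fails in the very first iteration, before the assignments at the end of the trace have run. The values of x2 and x3 in the returned environment come only from the resume variables attached to the guard.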

The data attached to guards about what needs to be done to resume to the interpreter when the guard fails is often a very complex part of a tracing system. The data can become big, yet most guards never fail. Therefore, most real systems try hard to compress the attached data or try to share it between subsequent guards.

Summary

In this post we have shown how to optimize traces by applying a variant of the partial evaluation principle: Perform all the operations that have only constant arguments, leave the others alone. However, optimizing traces is much simpler, because no control flow is involved. All the questions about control flow have already been solved by the tracing component.

In the next and final post of the series I will show a larger example of how tracing and partial evaluation can be used to optimize a small bytecode interpreter.

Almost There - PyPy's ARM Backend

Current Status

I have been working on the ARM backend for more than a year. It is now in a shape where we can measure meaningful numbers and ask for feedback. Since the last post about the backend we have added support for floating point operations as well as for PyPy's framework GCs. Another area of work was to keep up with the constant improvements done in the main development branch, such as out-of-line guards, labels, etc. It has been possible for about a year to cross-translate the PyPy Python interpreter and other interpreters such as Pyrolog, with a JIT, to run benchmarks on ARM. Up until now there remained some hard-to-track bugs that would cause the interpreter to crash with a segmentation fault in certain cases when running with the JIT on ARM. Lately it was possible to run all benchmarks without problems, but running the translation toolchain itself would crash. During the last PyPy sprint in Leysin, Armin and I managed to fix several of these hard-to-track bugs in the ARM backend, with the result that it is now possible to run the PyPy translator on ARM itself (at least until it runs out of memory), which is a kind of litmus test for the backend and used to crash before. Just to point it out, we are not able to complete a PyPy translation on ARM, because the hardware we currently have available does not have enough memory. But up to the point where we run out of memory the JIT does not hit any issues.

Implementation Details

The hardware requirements to run the JIT on ARM follow those for Ubuntu on ARM, which targets ARMv7 with a VFP unit running in little endian mode. The JIT can be translated without floating point support, but there might be a few places that need to be fixed to fully work in this setting. We are targeting the ARM instruction set because, at least at the time we decided to use it, it seemed to be the best choice in terms of speed, while having some size overhead compared to the Thumb2 instruction set. It appears that the Thumb2 instruction set should give comparable speed with better code density, but it has a few restrictions on the number of registers available and the use of conditional execution. Also, the implementation is a bit easier using a fixed width instruction set, and we can use the full set of registers in the generated code when using the ARM instruction set.

The calling convention on ARM

The calling convention on ARM uses 4 of the general purpose registers to pass arguments to functions; further arguments are passed on the stack. The presence of a floating point unit is not required for ARM cores, so there are different ways of handling floats with relation to the calling convention. There is a so-called soft-float calling convention that is independent of the presence of a floating point unit. For this calling convention floating point arguments to functions are stored in the general purpose registers and on the stack. Passing floats around this way works with software and hardware floating point implementations. But in the presence of a floating point unit it produces some overhead, because floating point numbers need to be moved from the floating point unit to the core registers to do a call and moved back to the floating point registers by the callee. The alternative calling convention is the so-called hard-float calling convention, which requires the presence of a floating point unit but has the advantage of getting rid of the overhead of moving floating point values around when performing a call. Although it would be better in the long term to support the hard-float calling convention, we need to be able to interoperate with external code compiled for the operating system we are running on. For this reason, at the moment we only support the soft-float calling convention. We implemented and tested the backend on a BeagleBoard-xM with a Cortex-A8 processor running Ubuntu 11.04 for ARM.

Translating for ARM

The toolchain used to translate PyPy is currently based on Scratchbox2, a cross-compiling environment. Development had stopped for a while, but it seems to have revived again. We run a 32-bit Python interpreter on the host system and perform all calls to the compiler using a Scratchbox2 based environment. A description on how to set up the cross translation toolchain can be found here.

Results

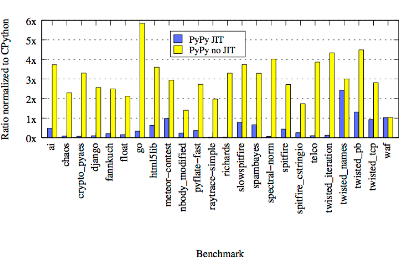

The current results on ARM, as shown in the graph above, show that the JIT currently gives a speedup of about 3.5 times compared to CPython on ARM. The benchmarks were run on the aforementioned BeagleBoard-xM with a 1GHz ARM Cortex-A8 processor and 512MB of memory. The operating system on the board is Ubuntu 11.04 for ARM. We measured the PyPy interpreter with the JIT enabled and disabled, comparing each to CPython Python 2.7.1+ (r271:86832) for ARM. The graph shows the speedup or slowdown of both PyPy versions for the different benchmarks from our benchmark suite, normalized to the runtime of CPython. The data used for the graph can be seen below.

The speedup is less than the speedup of 5.2 times we currently get on x86 on our own benchmark suite (see https://speed.pypy.org for details). There are several possible reasons for this. Comparing the results for the interpreter without the JIT on ARM and x86 suggests that the interpreter generated by PyPy, without the JIT, performs worse relative to CPython on ARM than it does on x86. Also, it is quite possible that the code we are generating with the JIT is not yet optimal. In addition, there are some architectural constraints that produce some overhead. One of these is the handling of constants: most ARM instructions only support 8-bit immediate values (that can be shifted); larger constants need to be loaded into a register, something that is not necessary on x86.
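The restriction on immediates can be illustrated with a small Python check. It assumes the classic ARM data-processing encoding, in which an immediate operand is an 8-bit value rotated right by an even number of bits. The function names are made up for this sketch and are not part of the PyPy backend.

```python
def rotate_left32(value, amount):
    """Rotate a 32-bit value left by amount bits (0 <= amount < 32)."""
    value &= 0xFFFFFFFF
    return ((value << amount) | (value >> (32 - amount))) & 0xFFFFFFFF


def fits_arm_immediate(value):
    """True if value is some 8-bit number rotated right by an even amount."""
    # Undoing a right rotation is a left rotation by the same amount.
    return any(rotate_left32(value, rot) < 0x100 for rot in range(0, 32, 2))


for constant in (0xFF, 0x3FC, 0xFF000000, 0x1FE, 0x101, 0x12345678):
    print(hex(constant), fits_arm_immediate(constant))
```

Constants for which the check fails have to be materialized in a register first, for example by loading them from memory or by building them up over several instructions.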

| Benchmark | PyPy JIT | PyPy no JIT |
| --- | --- | --- |
| ai | 0.484439780047 | 3.72756749625 |
| chaos | 0.0807291691934 | 2.2908692212 |
| crypto_pyaes | 0.0711114832245 | 3.30112318509 |
| django | 0.0977743245519 | 2.56779947601 |
| fannkuch | 0.210423735698 | 2.49163632938 |
| float | 0.154275334675 | 2.12053281495 |
| go | 0.330483034202 | 5.84628320479 |
| html5lib | 0.629264389862 | 3.60333138526 |
| meteor-contest | 0.984747426912 | 2.93838610037 |
| nbody_modified | 0.236969593082 | 1.40027234936 |
| pyflate-fast | 0.367447191807 | 2.72472422146 |
| raytrace-simple | 0.0290527461437 | 1.97270054339 |
| richards | 0.034575573553 | 3.29767342015 |
| slowspitfire | 0.786642551908 | 3.7397367403 |
| spambayes | 0.660324379456 | 3.29059863111 |
| spectral-norm | 0.063610783731 | 4.01788986233 |
| spitfire | 0.43617131165 | 2.72050579076 |
| spitfire_cstringio | 0.255538702134 | 1.7418593111 |
| telco | 0.102918930413 | 3.86388866047 |
| twisted_iteration | 0.122723986805 | 4.33632475491 |
| twisted_names | 2.42367797135 | 2.99878698076 |
| twisted_pb | 1.30991837431 | 4.48877805486 |
| twisted_tcp | 0.927033354055 | 2.8161624665 |
| waf | 1.02059811932 | 1.03793427321 |
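The headline speedup can be cross-checked against the table with a few lines of Python. The post does not say how the overall figure was computed, so using the geometric mean of the "PyPy JIT" column is an assumption of this sketch. The values are read as runtimes relative to CPython, where smaller is faster.

```python
import math

# "PyPy JIT" column from the table above (runtime relative to CPython).
JIT_RELATIVE_RUNTIME = [
    0.484439780047, 0.0807291691934, 0.0711114832245, 0.0977743245519,
    0.210423735698, 0.154275334675, 0.330483034202, 0.629264389862,
    0.984747426912, 0.236969593082, 0.367447191807, 0.0290527461437,
    0.034575573553, 0.786642551908, 0.660324379456, 0.063610783731,
    0.43617131165, 0.255538702134, 0.102918930413, 0.122723986805,
    2.42367797135, 1.30991837431, 0.927033354055, 1.02059811932,
]


def geometric_mean(values):
    """Geometric mean, computed via logarithms for numerical stability."""
    return math.exp(sum(math.log(v) for v in values) / len(values))


speedup = 1.0 / geometric_mean(JIT_RELATIVE_RUNTIME)
print(f"geometric-mean speedup over CPython: {speedup:.2f}x")
```

Under that assumption the result lands in the same range as the quoted figure of about 3.5 times.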

The next steps and call for help

Although there probably still are some remaining issues which have not surfaced yet, the JIT backend for ARM is working. Before we can merge the backend into the main development line there are some things that we would like to do first; in particular, we are looking for a way to run all the PyPy tests to verify that things work on ARM. Additionally there are some other long-term ideas. To do this we are looking for people willing to help, either by contributing to implement the open features or by helping us with hardware to test on.

The incomplete list of open topics:

- We are looking for a better way to translate PyPy for ARM than the one described above. I am not sure if there currently is hardware with enough memory to directly translate PyPy on an ARM based system; this would require between 1.5 and 2 GB of memory. A fully QEMU based approach could also work, instead of Scratchbox2, which uses QEMU under the hood.

- Test the JIT on different hardware.

- Experiment with the JIT settings to find the optimal thresholds for ARM.

- Continuous integration: We are looking for a way to run the PyPy test suite to make sure everything works as expected on ARM. Here QEMU might also provide an alternative.

- A long term plan would be to port the backend to the ARMv5 ISA and improve the support for systems without a floating point unit. This would require implementing the ISA, creating different code paths and improving the instruction selection depending on the target architecture.

- Review the machine code the JIT generates on ARM to see if the instruction selection makes sense for ARM.

- Build a version that runs on Android.

- Improve the tools, i.e. integrate with jitviewer.

Awesome news. We might be able to donate some time in the Linaro validation lab to running tests, I'll see what we can do...

"Just to point it out, we are not able to complete a PyPy translation on ARM, because on the hardware we have currently available there is not enough memory."

Can't you just add more swap?

You wrote: "The speedup is less than the speedup of 5.2 times you currently get on x86."

The removed comment was meant to point out that the author of the blog post does not (and cannot) know the actual speedups (and slowdowns) people are getting on their machines.

The speedup of 5.2 you mentioned is contradicting my own experience.

I suggest you rewrite your sentence into "The speedup is less than the speedup of 5.2 times we currently get on x86."

"The speedup of 5.2 you mentioned is contradicting my own experience."

Did you run the very same benchmark suite or some arbitrary programs? If arbitrary programs then sorry, but it's seriously impossible for us to optimize stuff we don't know about. Please submit bug tracker issues for that.

I agree the speedup should be qualified "on our own benchmark suite", but if you don't contribute benchmarks, you can't complain.

@⚛ to avoid misunderstandings I updated the sentence in question to make it clear that I was comparing the performance of the ARM backend running our own benchmark suite to the results of the benchmarks as shown on speed.pypy.org.

Every time I visit comments to blog posts on this page I see some hater or two or even more who, don't know why, have weird problems without a reason. People chill out, you get this brilliant piece of software and you do not have to pay for it.

@Naos: I do *not* hate PyPy. I like it and want to make it better. To do that, I am using a method different from your method. I would like the PyPy team to figure out how to run my benchmark faster without me disclosing the name of the benchmark. I believe that in the end this method will lead to a couple of universal optimizations in PyPy.

@⚛ Contrary to what you might believe, this ends up with you being annoying and nothing else. If you think we don't think all the time about general improvements, you're wrong, but pointless, unscientific complaining is not welcomed here.

will it work on the raspberry pi or does it use a different arm architecture?

(i am confused by arms. :) apparently it isn't like in the x86 world where everything is compatible with each other.)

@Anonymous

> The hardware requirements to run the JIT on ARM follow those for Ubuntu on ARM which targets ARMv7 with a VFP unit running in little endian mode.

This is higher than Raspberry Pi's ARMv6.

so someday it will support the v6 too? or isn't v5 and v6 compatible either?

@Anonymous ARMv6 should be backwards compatible to the ARMv5 ISA. So it should be possible to use the JIT on ARMv6 once it works for ARMv5.

Really nice with raspberry Pi being released and ARM proto boards gaining traction. Looking forward to develop w/ this on rPi

I have an arm system with enough swap, microsd or cifs or both... though a setup like this will probably take until next release to translate pypy, won't it?

Can also do usermode qemu of course.

@d.q. It would at least take until the next release, if not several...

A qemu based solution would be interesting. If you are interested I would propose you join #pypy on IRC to discuss

I'm pretty sure the guys over at Boundary Devices are making a SabreLite variant with 2GB ram.

https://boundarydevices.com/imx6-options-single-dual-core-2gb-ddr/

You may need to email them directly to purchase it still, but they're responsive and a pleasure to work with.

Anyone try to create a qemu based solution? Even if only a build system. I would be interested in having some documentation about how to build pypy for Raspberry Pi. I know it's a non-compatible ARM version but we have to start somewhere. Plus I have a very RPi's laying around to play with...

A Simple Tracer for the Flow Graph Language

Part 2 of Comparing Partial Evaluation to Tracing

This is the second blog post in a series about comparing partial evaluation and tracing. In the first post of the series I introduced a small flow-graph language together with an interpreter for it. Then I showed a partial evaluator for the language. In this post I will show how a tracer for the same language works and how it relates to both execution and to partial evaluation. The code from this post can be found here: https://paste.pocoo.org/show/543542/

Tracing Execution

The idea of a tracer (for the described language and also in general) is to do completely normal interpretation but at the same time keep a log of all the normal operations (i.e. non-control-flow operations) that were performed. This continues until the tracer reaches the code block where it started, in which case the trace corresponds to a closed loop. Then tracing stops and the last operation is replaced by a jump to the start. After tracing has ended, the trace can be executed, optionally after optimizing it.

To write a tracer, we start from the rules of the interpreter, rename the predicate to trace and add some extra arguments. Thus, the following rules in the interpreter:

interp(op1(ResultVar, Op, Arg, Rest), Env) :-

resolve(Arg, Env, RArg),

do_op(Op, RArg, Res),

write_env(Env, ResultVar, Res, NEnv),

interp(Rest, NEnv).

interp(op2(ResultVar, Op, Arg1, Arg2, Rest), Env) :-

resolve(Arg1, Env, RArg1),

resolve(Arg2, Env, RArg2),

do_op(Op, RArg1, RArg2, Res),

write_env(Env, ResultVar, Res, NEnv),

interp(Rest, NEnv).

become the following rules in the tracer:

trace(op1(ResultVar, Op, Arg, Rest), Env, op1(ResultVar, Op, Arg, T), TraceAnchor) :-

resolve(Arg, Env, RArg),

do_op(Op, RArg, Res),

write_env(Env, ResultVar, Res, NEnv),

trace(Rest, NEnv, T, TraceAnchor).

trace(op2(ResultVar, Op, Arg1, Arg2, Rest), Env, op2(ResultVar, Op, Arg1, Arg2, T), TraceAnchor) :-

resolve(Arg1, Env, RArg1),

resolve(Arg2, Env, RArg2),

do_op(Op, RArg1, RArg2, Res),

write_env(Env, ResultVar, Res, NEnv),

trace(Rest, NEnv, T, TraceAnchor).

Note how the bodies of the trace rules correspond exactly to the bodies of the interp rules; the only difference is the recursive call to trace. The meaning of the arguments of trace is as follows: the first and second argument are the operation currently executed and the environment, like in the interpreter. The argument after that is an output argument that collects the currently traced operation. In the example above it is exactly like the operation that was executed. TraceAnchor is additional information about the trace that is being built right now. Most of the time it is just handed on to the recursive call of trace. We will see later what it contains.

The rule for print_and_stop is very simple, as execution (and therefore also tracing) simply stops there:

trace(print_and_stop(V), Env, print_and_stop(V), _) :-

resolve(V, Env, Val),

print(Val), nl.

What is left are the rules for the control operations jump and if. A trace linearizes one execution path; it contains no jumps. However, when a jump to the starting label is reached, tracing should stop. Therefore, the implementation of jump contains two cases:

trace(jump(L), Env, T, TraceAnchor) :-

(TraceAnchor = traceanchor(L, FullTrace) ->

T = loop,

write(trace), nl, write(FullTrace), nl,

do_optimize(FullTrace, OptTrace),

write(opttrace), nl, write(OptTrace), nl,

runtrace(OptTrace, Env, OptTrace)

;

block(L, Block),

trace(Block, Env, T, TraceAnchor)

).

Let's dissect this code in small increments. First, we see what TraceAnchor is. It is a term of the form traceanchor(StartLabel, FullTrace). StartLabel is a label in the program where tracing started (and where it should end as well, when the loop is closed). The argument FullTrace is an accumulator which contains the full trace that is being built right now.

The condition at the start of the rule checks whether the target label L is the same as the one stored in the trace anchor. If that is the case, we can stop tracing. The rest of the trace T is assigned the operation loop, which jumps back to the beginning of the trace. Afterwards we print and optimize the trace, then run it, using the FullTrace part of the traceanchor.

If the label we jump to is not the StartLabel we simply continue tracing without recording any operation. This part of the rule is again extremely similar to the interpretation of jump.

For now, we will not use any interesting optimizations, just return the unoptimized trace unchanged:

do_optimize(FullTrace, FullTrace).

The missing operation now is if. An if statement needs special treatment, because it is a point where control flow can diverge from the trace. The trace is linear, therefore it can only record one of the two possible paths. When executing the trace it is possible for the other path to be taken. Therefore we need to make sure that the same conditions that were true or false during tracing are still true or false during the execution of the trace. This is done with a guard operation, which checks for this condition. The following rule implements it:

trace(if(V, L1, L2), Env, T, TraceAnchor) :-

lookup(V, Env, Val),

(Val == 0 ->

L = L2, T = guard_false(V, [], L1, NT)

;

L = L1, T = guard_true(V, [], L2, NT)

),

trace(jump(L), Env, NT, TraceAnchor).

It is very similar to the interp rule of if. The rule inserts a guard_true into the trace if the condition is true, and a guard_false if the condition is false. The arguments of the guard are: the variable that is being guarded, an empty list (the reason for that will be explained in a later post), the label where execution needs to continue when the guard fails and the rest of the trace.

Let's also add a small helper predicate that can be used to conveniently start tracing:

do_trace(L, Env) :-

block(L, StartBlock),

trace(StartBlock, Env, ProducedTrace, traceanchor(L, ProducedTrace)).

The predicate takes a label and an environment and executes the label with the given environment by first producing a trace, then executing the trace and eventually jumping back to interpretation, if a guard fails. It does this by reading the code at label L with the block statement, and then calling trace with an unbound variable ProducedTrace to hold the trace and a trace anchor that contains the label where tracing started and the produced trace variable.

With that predicate and the trace rules so far we can already trace the power implementation from the last blog post, just not execute the trace (which we will do in the next section):

?- do_trace(power_rec, [res/1, x/10, y/20]).

trace

op2(res,mul,var(res),var(x),op2(y,sub,var(y),const(1),guard_true(y,[],power_done,loop)))

opttrace

op2(res,mul,var(res),var(x),op2(y,sub,var(y),const(1),guard_true(y,[],power_done,loop)))

...

The computed trace is:

op2(res,mul,var(res),var(x), op2(y,sub,var(y),const(1), guard_true(y,[],power_done, loop)))

which is exactly the content of the loop from power_rec. Note how the if is turned into a guard_true which jumps to power_done if the guard fails.
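The tracing rules seen so far can be condensed into a short Python sketch. It is a loose model and not a translation of the Prolog code: blocks are flat lists of tuples stored in a dict, the trace is a Python list, and do_trace simply returns the trace and the environment once the loop closes instead of optimizing and running it. All names are chosen for this illustration.

```python
import operator

BINOPS = {"mul": operator.mul, "sub": operator.sub}

# power_rec in a hypothetical flat encoding: one list of statements per label.
BLOCKS = {
    "power_rec": [
        ("op2", "res", "mul", ("var", "res"), ("var", "x")),
        ("op2", "y", "sub", ("var", "y"), ("const", 1)),
        ("if", "y", "power_rec", "power_done"),
    ],
    "power_done": [("print_and_stop", ("var", "res"))],
}


def resolve(arg, env):
    kind, payload = arg
    return env[payload] if kind == "var" else payload


def do_trace(start_label, env):
    """Interpret from start_label, logging operations until the loop closes."""
    env = dict(env)
    trace = []
    label = start_label
    while True:
        for stmt in BLOCKS[label]:
            if stmt[0] == "op2":
                _, res, op, a1, a2 = stmt
                env[res] = BINOPS[op](resolve(a1, env), resolve(a2, env))
                trace.append(stmt)  # normal operations are logged as they are
            elif stmt[0] == "if":
                _, var, l_true, l_false = stmt
                if env[var] == 0:
                    trace.append(("guard_false", var, [], l_true))
                    label = l_false
                else:
                    trace.append(("guard_true", var, [], l_false))
                    label = l_true
            elif stmt[0] == "jump":
                label = stmt[1]  # jumps leave no mark in the trace
            elif stmt[0] == "print_and_stop":
                trace.append(stmt)
                return trace, env
        if label == start_label:
            trace.append(("loop",))  # back at the start: the loop is closed
            return trace, env


trace, env = do_trace("power_rec", {"res": 1, "x": 10, "y": 20})
for op in trace:
    print(op)
```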

A real tracing system would need a way for the tracer to get started, e.g. by doing profiling in an interpreter and starting the tracer for labels that are jumped to often. Also, traces for the same label are usually cached in some way. These details are left out in this simple model.

Executing Traces

In a real tracing system, the traces would be turned into machine code and executed by the CPU. In our small model, we will simply write another interpreter for them. This interpreter is very simple and looks again very similar to interp.

runtrace(op1(ResultVar, Op, Arg, Rest), Env, TraceFromStart) :-

resolve(Arg, Env, RArg),

do_op(Op, RArg, Res),

write_env(Env, ResultVar, Res, NEnv),

runtrace(Rest, NEnv, TraceFromStart).

runtrace(op2(ResultVar, Op, Arg1, Arg2, Rest), Env, TraceFromStart) :-

resolve(Arg1, Env, RArg1),

resolve(Arg2, Env, RArg2),

do_op(Op, RArg1, RArg2, Res),

write_env(Env, ResultVar, Res, NEnv),

runtrace(Rest, NEnv, TraceFromStart).

These rules are completely equivalent to the interp rules for op1 and op2. runtrace needs an extra argument, TraceFromStart, which is always just handed over to the recursive call of runtrace.

When the end of the trace is reached and the loop statement is encountered, we simply start from the beginning:

runtrace(loop, Env, TraceFromStart) :-

runtrace(TraceFromStart, Env, TraceFromStart).

The remaining question is what to do when encountering guards. In that case the guard condition needs to be checked. If the guard succeeds, executing the trace can continue. Otherwise the trace is aborted and the interpreter resumes execution:

runtrace(guard_true(V, ResumeVars, L, Rest), Env, TraceFromStart) :-

lookup(V, Env, Val),

(Val == 0 ->

resume_interp(Env, ResumeVars, L)

;

runtrace(Rest, Env, TraceFromStart)

).

runtrace(guard_false(V, ResumeVars, L, Rest), Env, TraceFromStart) :-

lookup(V, Env, Val),

(Val == 0 ->

runtrace(Rest, Env, TraceFromStart)

;

resume_interp(Env, ResumeVars, L)

).

resume_interp(Env, [], L) :-

block(L, Block),

interp(Block, Env).

Note how the execution is handed over to the interpreter at the label that was encoded as the third argument in the guard operation. What the ResumeVars are for we will see in a later post. For now we assume that it is always an empty list.
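The same execution model can be written as a compact Python loop. This is again only a sketch under assumptions: the trace is a flat list of tuples, environments are dicts, and a failing guard returns the resume label and the environment instead of calling the interpreter.

```python
import operator

BINOPS = {"mul": operator.mul, "sub": operator.sub}

# The trace of power_rec, in a hypothetical flat tuple encoding.
POWER_TRACE = [
    ("op2", "res", "mul", ("var", "res"), ("var", "x")),
    ("op2", "y", "sub", ("var", "y"), ("const", 1)),
    ("guard_true", "y", [], "power_done"),
    ("loop",),
]


def resolve(arg, env):
    kind, payload = arg
    return env[payload] if kind == "var" else payload


def runtrace(trace, env):
    """Execute the trace until a guard fails; return (label, env)."""
    env = dict(env)
    while True:  # every pass over the trace is one loop iteration
        for op in trace:
            kind = op[0]
            if kind == "op2":
                _, res, name, a1, a2 = op
                env[res] = BINOPS[name](resolve(a1, env), resolve(a2, env))
            elif kind in ("guard_true", "guard_false"):
                _, var, _resume_vars, label = op
                if kind == "guard_true":
                    failed = env[var] == 0
                else:
                    failed = env[var] != 0
                if failed:
                    return label, env  # here the interpreter would take over
            # kind == "loop": nothing to do, the while loop restarts the trace


label, env = runtrace(POWER_TRACE, {"res": 1, "x": 10, "y": 20})
print(label, env["res"])
```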

With this interpreter for traces we can now trace and then execute the example:

:- do_trace(power_rec, [res/1, x/10, y/20]).

trace

op2(res,mul,var(res),var(x),op2(y,sub,var(y),const(1),guard_true(y,[],power_done,loop)))

opttrace

op2(res,mul,var(res),var(x),op2(y,sub,var(y),const(1),guard_true(y,[],power_done,loop)))

100000000000000000000

Of course this example is not very exciting, because the trace looks more or less exactly like the original code. There will be more exciting examples in a later post.

Extension: Promotion

As it is, the tracer does not actually add much to the interpreter. It linearizes control flow, but nothing deeply advanced happens. In this section I will add a crucial but simple to implement extension to the control flow language that allows the tracer to do more interesting things. This extension is called promotion.

Promotion is basically a hint that the programmer can add to her control flow graph program. A promotion is an operation promote(V, L) that takes a variable V and a label L. When the interpreter runs this statement, it simply jumps to the label L and ignores the variable:

interp(promote(_, L), Env) :-

interp(jump(L), Env).

However, the tracer does something much more interesting. For the tracer, the promote statement is a hint that it would be very useful to know the value of V and that the rest of the trace should keep that value as a constant. Therefore, when the tracer encounters a promotion, it inserts a special kind of guard called guard_value:

trace(promote(V, L), Env, guard_value(V, Val, [], L, T), TraceAnchor) :-

lookup(V, Env, Val),

trace(jump(L), Env, T, TraceAnchor).

The guard_value is an interesting operation, because it freezes the current value FVal of variable V into the trace. When the trace is executed, the guard checks that the current value of the variable and the frozen value are the same. If yes, execution continues, if not, the trace is aborted:

runtrace(guard_value(V, FVal, ResumeVars, L, Rest), Env, TraceFromStart) :-

lookup(V, Env, Val),

(Val == FVal ->

runtrace(Rest, Env, TraceFromStart)

;

resume_interp(Env, ResumeVars, L)

).

What can this operation be used for? It's a way to communicate to the tracer that variable V is not changing very often and that it is therefore useful to freeze the current value into the trace. This can be done even without knowing the value of V in advance.

Let's look at a (slightly contrived) example:

l:

c = i >= 0

if c goto b else goto l_done

l_done:

print_and_stop(var(i))

b:

promote(x, b2)

b2:

x2 = x * 2

x3 = x2 + 1

i = i - x3

goto l

Encoded in Prolog syntax:

block(l, op2(c, ge, var(i), const(0),

if(c, b, l_done))).

block(l_done, print_and_stop(var(i))).

block(b, promote(x, b2)).

block(b2, op2(x2, mul, var(x), const(2),

op2(x3, add, var(x2), const(1),

op2(i, sub, var(i), var(x3),

jump(l))))).

This is a simple loop that counts down in steps of x * 2 + 1, whatever x might be, until i >= 0 is no longer true. Assuming that x doesn't change often, it is worth promoting it so that x * 2 + 1 can be constant-folded and does not have to be recomputed on every iteration. This is done with the promotion of x (of course optimizing this loop with loop invariant code motion would work as well, because x doesn't actually change during the loop).

To trace this, we can run the following query:

?- do_trace(b, [i/100, x/5]).

trace

guard_value(x,5,[],b2,op2(x2,mul,var(x),const(2),op2(x3,add,var(x2),const(1),op2(i,sub,var(i),var(x3),op2(c,ge,var(i),const(0),guard_true(c,[],l_done,loop))))))

opttrace

guard_value(x,5,[],b2,op2(x2,mul,var(x),const(2),op2(x3,add,var(x2),const(1),op2(i,sub,var(i),var(x3),op2(c,ge,var(i),const(0),guard_true(c,[],l_done,loop))))))

-10

Writing the trace in a more readable way:

guard_value(x,5,[],b2,

op2(x2,mul,var(x),const(2),

op2(x3,add,var(x2),const(1),

op2(i,sub,var(i),var(x3),

op2(c,ge,var(i),const(0),

guard_true(c,[],l_done,

loop))))))
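To make the behaviour of this trace concrete, here is a minimal sketch of a trace runner in Python rather than Prolog. It is not the code from this series: the tuple encoding, the names run_trace, value and OPS, and the choice to return the resume label instead of calling an interpreter are all assumptions made for illustration. It also assumes that ge yields 1 or 0, as the guards' comparison against 0 suggests.

```python
import operator

# Binary operations used by op2. Comparisons yield 1 or 0 (an assumption
# based on the guards comparing values against 0).
OPS = {
    "mul": operator.mul,
    "add": operator.add,
    "sub": operator.sub,
    "ge": lambda a, b: int(a >= b),
}


def value(arg, env):
    """Evaluate an argument of the form ("var", name) or ("const", number)."""
    kind, payload = arg
    return env[payload] if kind == "var" else payload


def run_trace(trace, env):
    """Run a trace until a guard fails.

    Returns (label, env): the label where an interpreter would resume,
    and the environment at that point.
    """
    env = dict(env)  # do not mutate the caller's environment
    op = trace
    while True:
        if op == "loop":
            op = trace  # jump back to the start of the trace
        elif op[0] == "op2":
            _, res, name, arg1, arg2, rest = op
            env[res] = OPS[name](value(arg1, env), value(arg2, env))
            op = rest
        elif op[0] == "guard_value":
            _, var, frozen, _resume_vars, label, rest = op
            if env[var] != frozen:
                return label, env  # frozen value no longer matches
            op = rest
        elif op[0] == "guard_true":
            _, var, _resume_vars, label, rest = op
            if env[var] == 0:
                return label, env  # condition became false
            op = rest
        else:
            raise ValueError("unknown trace operation: %r" % (op,))


# The trace from the query above, transcribed into nested tuples.
TRACE = ("guard_value", "x", 5, [], "b2",
         ("op2", "x2", "mul", ("var", "x"), ("const", 2),
          ("op2", "x3", "add", ("var", "x2"), ("const", 1),
           ("op2", "i", "sub", ("var", "i"), ("var", "x3"),
            ("op2", "c", "ge", ("var", "i"), ("const", 0),
             ("guard_true", "c", [], "l_done", "loop"))))))

label, final_env = run_trace(TRACE, {"i": 100, "x": 5})
print(label, final_env["i"])  # l_done -10
```

Starting the same trace with a different x, for example run_trace(TRACE, {"i": 100, "x": 3}), fails the guard_value immediately and hands back the label b2 with the environment unchanged.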

After the guard_value the operations performed on x could be constant-folded away, because the guard ensures that x is 5 before execution continues. To actually do the constant-folding we would need some optimization component that optimizes traces. This will be done in the next blog post.
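As a rough illustration of what the guard buys us, the following Python sketch shows a hand-folded version of the loop. It is not the optimizer from the next post. The function name run_folded_trace and its return convention are hypothetical, and the sketch simply drops x2 and x3, which is acceptable here only because l_done reads nothing but i.

```python
def run_folded_trace(env, frozen_x=5):
    """Hand-folded version of the trace, valid while x == frozen_x.

    Returns (label, env) for the point where an interpreter would resume.
    """
    env = dict(env)
    step = frozen_x * 2 + 1  # x * 2 + 1 folded once: 11 for x == 5
    while True:
        if env["x"] != frozen_x:      # guard_value(x, 5, [], b2, ...)
            return "b2", env
        env["i"] -= step              # i = i - x3, with x3 now a constant
        env["c"] = int(env["i"] >= 0)
        if env["c"] == 0:             # guard_true(c, [], l_done, loop)
            return "l_done", env


label, final_env = run_folded_trace({"i": 100, "x": 5})
print(label, final_env["i"])  # l_done -10
```

The multiplication and addition are gone from the loop body. The guard on x stays, because it is what justifies treating x * 2 + 1 as the constant 11.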

In this section I mostly talked about how promotion is realized in the tracer, not about what to use it for or how to use it. Promotion is one of the most important ingredients responsible for the success of PyPy's tracing approach. How this works is discussed in detail in the paper "Runtime feedback in a meta-tracing JIT for efficient dynamic languages".

Conclusion

In this blog post we have seen a very minimalistic tracer and an interpreter for the produced traces. The tracer is very much like the original interpreter; in addition to executing the program, it keeps track of which operations were executed. Tracing stops when a loop is closed, then the trace can be optimized and run. Running a trace continues until a failing guard is hit. At that point, execution goes back to the normal interpreter (and stays there, in this very simple implementation).

I also presented an extension of tracing that makes it possible to add a hint called promote to the original program that tells the tracer to feed back a runtime value into the trace and freeze it there. This extension would be impossible to do in the partial evaluator from the last post, because partial evaluation is done strictly before run time, so if a variable isn't already known, its likely runtime value cannot be found out.

In the next post I will show how to optimize traces before executing them and how the optimizer for traces is related to partial evaluation.

Hi, these posts are great!

A question: shouldn't runtrace resume tracing instead of running the interpreter (in resume_interp)?

And perhaps a clarification: when the blog post calls do_trace all of the necessary code has not been shown yet, so one can't really follow along at the keyboard there just yet.

@larsr: thanks!

Yes, in principle you are right that there could be a mechanism that starts to trace from the point where a guard fails. This is an element of tracing JITs that the current code leaves out; it would need to be solved together with the caching of traces.

A lot of things are just sketched in this implementation, e.g. only one trace ever is started, once you end up in the interpreter the tracer never starts again.

trace(if(V, L1, L2), Env, T, TraceAnchor) :-

lookup(V, Env, Val),

(Val == 0 ->

L = L2, T = guard_false(V, [], L1, NT)

;

L = L1, T = guard_true(V, [], L2, NT)

),

trace(jump(L), Env, NT, TraceAnchor).

This trace is okay, but the Python IDE is not supported on Android.

NumPyPy status update

Hello.

This is just a quick status update on the NumPy in PyPy project that very recently became my day job. I should give my thanks once again to Getco, Nate Lawson and other contributors who donated more than $40000 towards the goal.

Recently we (Alex Gaynor, Matti Picus and me) implemented a few interesting things that a lot of people use:

- more ufuncs

- most ufuncs now accept the axis parameter (except all and any)

- fixed string representation of arrays, now it's identical to numpy (uses pretty much the same code)

- ndarray.flat should be working correctly

- ndarray.flatten, ndarray.ravel, ndarray.take

- indexing arrays by boolean arrays of the same size

- and various bugfixes.
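As a quick illustration of the features listed above, here is a small sketch written against standard NumPy semantics. It does not claim that every call already works in PyPy's implementation. The array contents and variable names are made up for the example.

```python
import numpy as np

a = np.arange(6).reshape(2, 3)  # [[0, 1, 2], [3, 4, 5]]

# Reductions along an axis.
col_sums = np.add.reduce(a, axis=0)      # one sum per column
row_max = np.maximum.reduce(a, axis=1)   # one maximum per row

# Flat iteration and flattened arrays.
flat_items = [int(v) for v in a.flat]    # iterate in row-major order
flattened = a.flatten()                  # always returns a copy
raveled = a.ravel()                      # returns a view when possible

# take selects elements by index into the flattened array.
picked = a.take([0, 5])

# Indexing with a boolean array of the same shape.
mask = a > 2
selected = a[mask]

print(col_sums.tolist(), row_max.tolist(), picked.tolist(), selected.tolist())
# [3, 5, 7] [2, 5] [0, 5] [3, 4, 5]
```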

We would also like to introduce the nightly report of numpy status. This is an automated tool that does package introspection. While it gives some idea of how much of numpy is implemented, it is far from authoritative. Your tests should be the authority. It won't report whether functions support all kinds of parameters (for example, masked arrays and the out parameter are completely unsupported) or whether functions work at all. We also reserve the right to incorporate jokes in that website, so don't treat it that seriously overall :-)

Thanks, and stay tuned. We hope to post here regular updates on the progress.

Cheers,

fijal & the PyPy team

I use the "out" parameter very often in my code (with numpy.take); without it my code would run much worse (because huge arrays of hundreds of MB would be copied many times inside a big loop). How is the "out" parameter currently handled (warning, error, nothing)?

It just errors with a more or less acceptable error message. Note that pypy does not create intermediates for most operations, so if you have a lot of them chained, using out will actually be worse than not using it.

I'm new to python but not to Cpython/numpy/scipy/matplotlib and I fail to understand what you are doing.

* In a nutshell, what's numpypy? Is it a rewrite of the numpy code to make it compatible with pypy? or are you working on pypy itself to be able to run numpy as it is??

* if numpypy is a rewrite of numpy, that's good but how do you plan to keep numpy and numpypy sync (in terms of functionalities)??

* Using numpy with pypy will be great but what about scipy and matplotlib??

Many users need at least these two modules on top of numpy.

I would be very happy with pypy being able to work with unpatched numpy/scipy/matplotlib.

I think your website should summarise these issues on its front page.

Py3k and Numpy First Stage: Thanks to all who Gave

Last year was quite successful for PyPy fundraising through the Software Freedom Conservancy, and Conservancy and PyPy are very excited to announce that enough was raised to begin the first stages on the Py3k and Numpy grant proposals.

As of the end of 2011, 135 different individuals gave to the Py3k campaign, and 114 to the Numpy campaign. We thank each of you who donated to help make this work possible. Meanwhile, if you haven't given to support these projects, we do hope you'll give generously now to help fund their second stages later this year!

We're also particularly excited that a few donors gave particularly large donations to support this work; those big donations really filled in the gap to help us get started!

Specifically, we're pleased to announce that Google donated $35000 towards implementing Python 3 in PyPy. Google's general support of the Python community is well known, and their specific support of our grant proposal is much appreciated.

Meanwhile, Numpy was supported in part by contributions from Nate Lawson, Cantab Capital Partners, and Getco, as well as more than a hundred other contributors.

With these donations combined with many others, we're now starting work on both projects. This week, the Conservancy signed contracts with Antonio Cuni and Benjamin Peterson to work towards the Stage 1.1 goals in the Py3k proposal (and is negotiating for another contractor as well), and with Maciej Fijałkowski to work towards the Stage 1 goals in the Numpy proposal.

In 2012, PyPy will continue regular sprint meetings, at which Py3K and Numpy efforts will certainly have a place. We have some limited funds to cover contributors' travel to those meetings.

We're very thankful for all who donated so far to support these efforts, and we hope that now that work has begun, even more donors will come forward to help us finish the job. In the meantime, watch for the commits showing up from these developers and other contributors in the PyPy repositories!

Cheers, The PyPy Team

It seems strange to me that Amaury Forgeot d'Arc wasn't the first one to be contracted for working on Py3k support. From the commit messages, he seems to have done most of the work in the py3k branch so far, or is he the unnamed third contractor?

What about a Py2k8, is there any hope? Will at least 2.7 still be supported?

@Gaëtan: The reason is simple: I already have a regular day job, 40 hours a week, and I cannot have another remuneration without consent of my employer.

Actually I started the py3k branch before the funding proposal, and even before that I've been trying different ways to do the transition from str to unicode.

Then, my understanding of the JIT and other optimizations is very poor. And there are important changes to make around the representation of unicode for example, or the int/long unification, if we want pypy3k to be as fast as 2.7.

I am quite happy with the current state: some people are paid to do and finish the real job, and volunteers can have fun and help in some parts, working on the most interesting project around Python.

You might consider leaving the u prefix in--PEP 414 puts it back, and it just got accepted.

https://www.python.org/dev/peps/pep-0414/

It's cleaner to flush them out - the forward porting effort is targeting 3.2, so the stdlib should respect that. (i.e. if it runs on PyPy by default, it should run on CPython 3.2 as well).

Otherwise we'll end up with confusing cases of "this runs on 3.2 in PyPy, but CPython reports a syntax error"

For importing support, you may want to look at the current state of the 3.3 importlib implementation. Brett's on the verge of hooking that up as CPython's native import system - it should be possible for PyPy3k to use it as well.

@Larry: yes, I've considered that, but as Nick says we are targeting 3.2, so it's much easier to just kill it in the meantime. Adding it back will be very easy.

@Nick: yes, using importlib is something which I also have considered. However, I'd prefer not to diverge too much from the "default" branch (because we are going to regularly merge default into py3k for a long time). I suppose that as long as the current importing logic works fine, we'll keep it :-)

The u"" syntax is coming back.